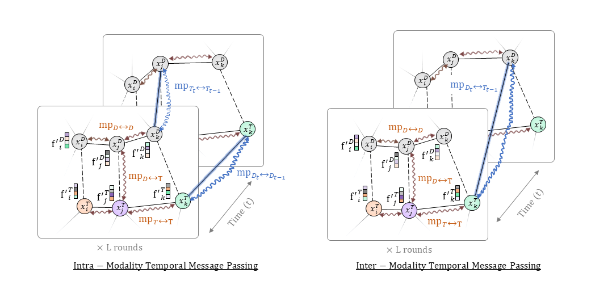

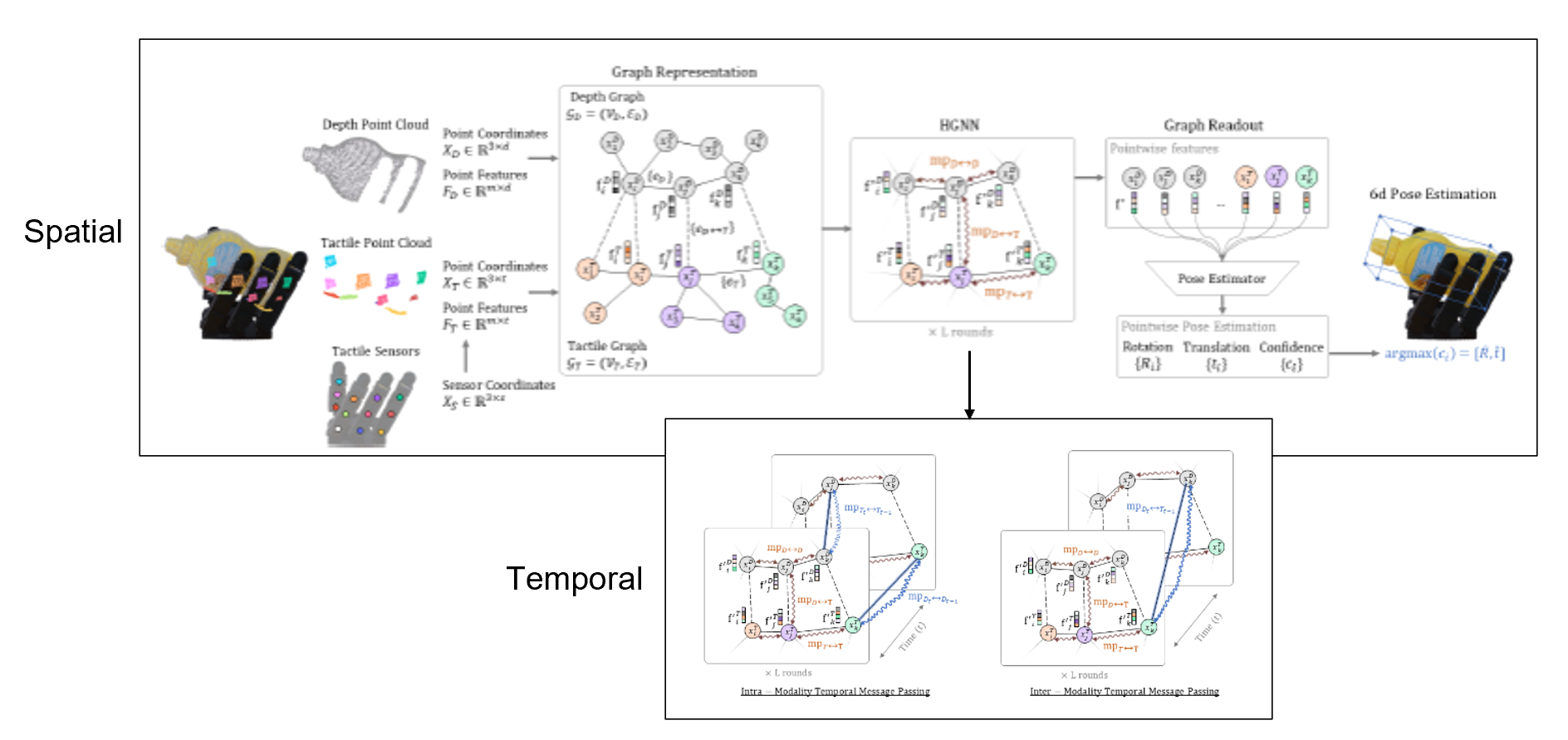

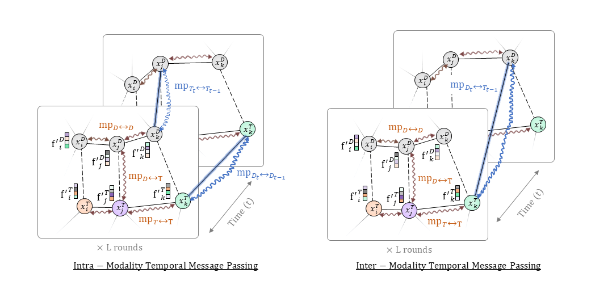

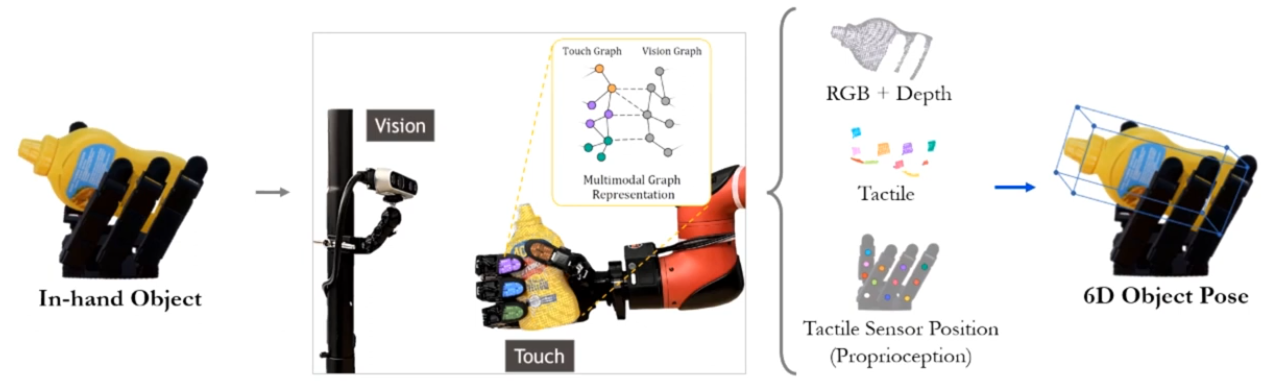

DynastGNN: Dynamic Spatio-Temporal Hierarchical Graph Neural Network for Visuotactile 6D Pose Estimation of an In-Hand Object

Snehal Dikhale, et al.

Paper in Progress, 2025

Robotics Researcher @ Honda Research Institute

The industry has mastered seeing. I want to give robots the ability to truly feel. That means cracking tactile representation and using it to unlock dexterous manipulation.

Right now I'm extending Vision-Language-Action architectures with touch, connecting foundation models all the way down to real hardware.

I'm equal parts researcher and engineer, and I genuinely love both. That blend of research and hands-on building is what I call hardware intuition. Always happy to connect, whether it's about research, life, or just geeking out about robots.

Researcher by Nature, Engineer by Practice

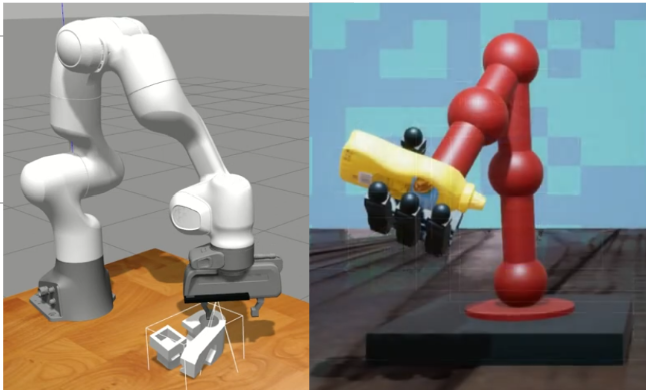

Simulation

High-fidelity physics modeling

Domain randomization

Sim-to-real transfer

Algorithm

Multimodal learning (Vision + Touch)

Graph neural networks

Representation learning

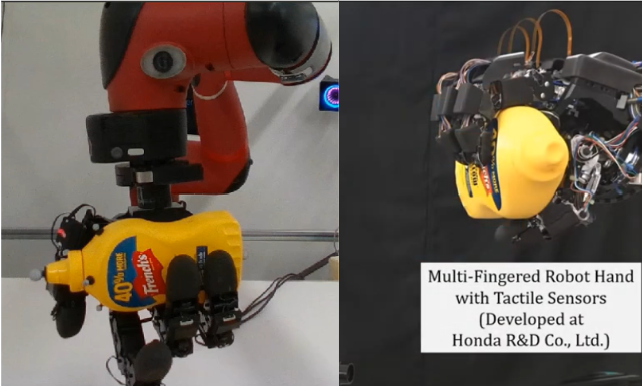

Hardware

Real-world deployment

Tactile sensor integration

Multi-fingered manipulation

Honda Research Institute · Apr 2024 – Present

25%

30%

3

Honda Research Institute · Sep 2020 – Apr 2024

65%

3×

100+

Worcester Polytechnic Institute · 2018 – 2020

78%

3.8

4

S. Zhao*, S. Dikhale, et al.

* Mentored Intern

US Provisional Patent, 2025

Provisional Patent

A. Shahidzadeh*, S. Dikhale, et al.

* Mentored Intern

US Provisional Patent, 2025

Provisional Patent

Research & AI

Software & Frameworks

Simulation & Tools

A personal essay on what it really means to build a career in robotics — the doubt, the obsession, the hardware that breaks at 11pm, and why I'd choose it all over again.

Read on Medium